6G-life

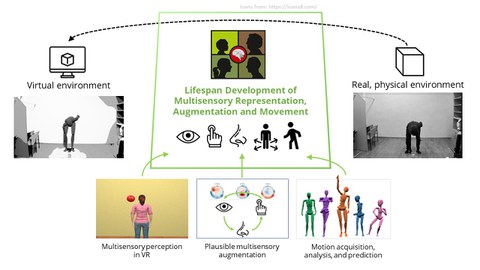

In 6G-life, we investigate the impacts of brain development and aging on multisensory perception, sensory augmentation, motion planning, and motion execution in virtual reality (VR).

Background

This project belongs to the 6G-life research hub, which joins the forces of TU Dresden and TU München to drive cutting-edge research for future 6G communication networks with a focus on human-machine collaboration. Through our research, we want to provide researchers in engineering and computational sciences with crucial human-factors parameters for designing multisensory virtual environments for human-human and human-machine interactions.

Approaches

We conduct studies in three major research fields:

Multisensory perception is a basic requirement for our brains to form cohesive representations of our surroundings and body. Yet exactly how the fundamentals of multisensory perception translate to virtual environments is not fully known. Additionally, differences in how young and old brains make this translation still need to be understood. To investigate this, we design experiments that compare the processes of multisensory perception in virtual reality between young and old adults.

Multisensory environments require multisensory solutions in order to create more realistic human-human interactions. This especially applies to affective touch or other sensory modalities such as tactile-olfactory processing. How does the interaction between our senses in real and virtual environments influence our perception and how can we use this knowledge in the development of future technologies?

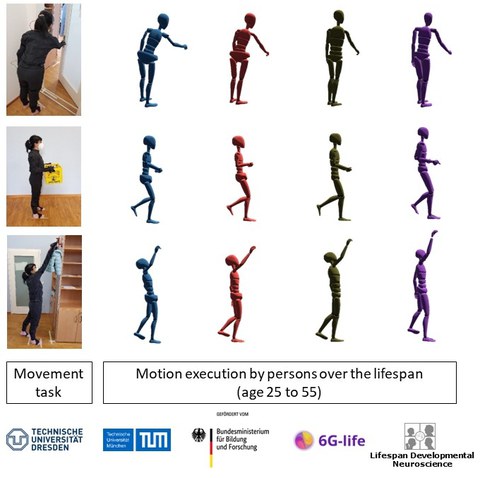

Aging affects the cognitive and motor functions of the elderly. On the one hand, exercise is helpful for maintaining the physical fitness of seniors; on the other hand, many elderly people experience limitations in their motor movements, either when performing daily activities or while exercising. Digitalized whole-body movement data is valuable (i) for developing machine learning (ML) algorithms to detect and diagnose movement problems and (ii) for designing tele-physical fitness or tele-rehabilitation exercises in virtual/augmented reality environments. So far, available open movement databases mostly focus on data collected from younger adults. Thus, we plan to use wearable whole-body sensor suits and gloves to capture movement data of daily activities or exercise movements in a lifespan sample including younger, middle-aged, and older adults, and to make these datasets available to the research community as open data.

Currently running studies

Multisensory processing of touch stimuli delivered by a sleeve

In cooperation with:

- Sachith Muthukumarana (University of Auckland, NZ, https://profiles.auckland.ac.nz/pmut288)

- Robert Rosenkranz (Chair of Haptics and Acoustics, https://tu-dresden.de/ing/elektrotechnik/ias/aha/die-professur/Mitarbeiter/robert-rosenkranz)

- Robert Kirchner (PhD student, Chair of Haptics and Acoustics, https://tu-dresden.de/ing/elektrotechnik/ias/aha/die-professur/Mitarbeiter/copy_of_anna-schwendicke)

Effect of a virtual full-body illusion on the representation of peripersonal space in virtual reality

In cooperation with:

Yichen Fan (https://6g-life.de/yichen-fan/)

Demographically representative database of activities of daily living (ADL) of people over the life span, recorded with full-body motion capture suits and gloves

In cooperation with:

- Simon Hanisch (https://ceti.one/simon-hanisch/)

- With the engaged help of the following student assistants:

  - Veronica Yolanda Puspita Wardhani (2022/23)

  - Alexander Behrens (2022/23)

  - Moritz Fuchs (2023)

  - Anna Hannemann (2023)

In the subproject TeleTaichi, we will set up a virtual environment in which users can participate in tele-exercise sessions and learn basic Taichi movements from an expert. We will record the participants’ movements during the exercise sessions. Among other factors, we are interested in the effects of using movement metaphors to improve motor imagery and remote motor learning. Furthermore, we are interested in using these data to develop ML methods for classifying motor skill levels after prolonged training and for online adaptation of the user interface to the individual characteristics of specific user groups.

In cooperation with:

Martina Morasso (https://www.theapolis.de/de/profil/martina-morasso)

Principal investigator

Staff

Collaboration partners

- Prof. Gianaurelio Cuniberti (TUD, Chair of Materials Science and Nanotechnology, https://www.nano-tud.de/member/gc/)

- Prof. Dr. rer. nat. Stefan Gumhold (TUD, Chair of Computer Graphics and Visualization, https://tu-dresden.de/ing/informatik/smt/cgv/die-professur/inhaber-in)

- Prof. Andrea Serino (Head of MySpace Lab, University Hospital of Lausanne, https://wp.unil.ch/myspacelab/prof-andrea-serino/)

Acknowledgement

The authors acknowledge the financial support by the Federal Ministry of Education and Research of Germany in the programme of “Souverän. Digital. Vernetzt.”. Joint project 6G-life, project identification number: 16KISK001K